Here are the steps to install and configure Skyline Collector 3.1 in a VCF 3.11 environment.

NOTE: Some of the content may be pixelated, or the data changed, to protect existing environments at my organization.

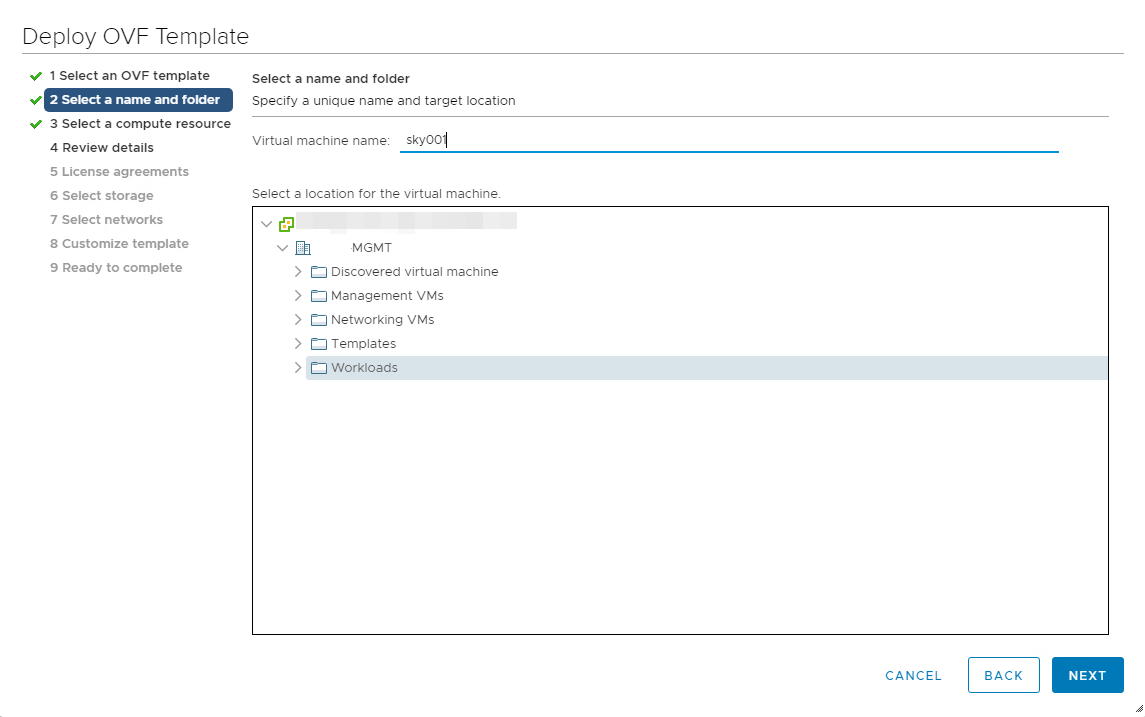

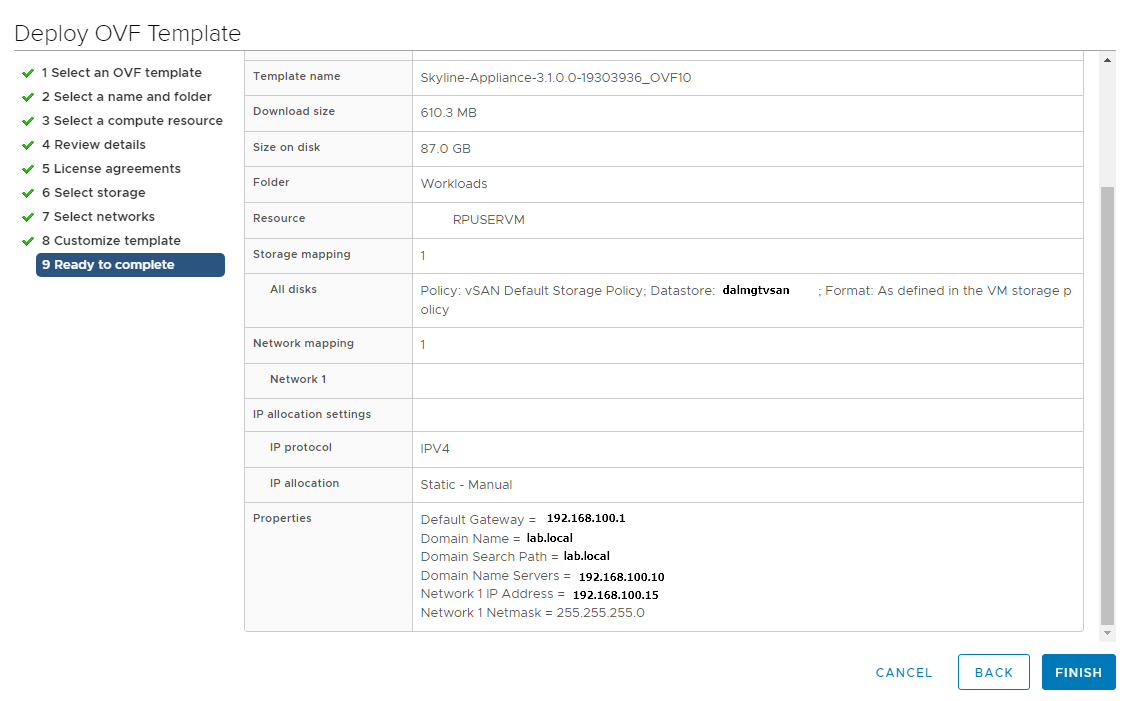

First, download the Skyline OVA from the VMware portal. As of this post, the current filename is Skyline-Appliance-3.1.0.0-19303936_OVF10.ova
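Before deploying, it can be worth confirming that the download is intact. The snippet below is a minimal sketch that computes the SHA-256 of the OVA so you can compare it with a checksum from the download page. It assumes a SHA-256 value is published there; the function names `sha256_of_file` and `matches_published_checksum` are my own and not part of any VMware tooling.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, read in chunks to keep memory low."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        # Read fixed-size chunks until read() returns an empty bytes object.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path, published_hex):
    """Compare the file digest with a published value (assumed to be SHA-256 hex)."""
    return sha256_of_file(path) == published_hex.strip().lower()


# Example usage (the checksum string is a placeholder you copy from the portal):
# ok = matches_published_checksum(
#     "Skyline-Appliance-3.1.0.0-19303936_OVF10.ova",
#     "<checksum from the download page>",
# )
```

If the comparison fails, download the file again before deploying it.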

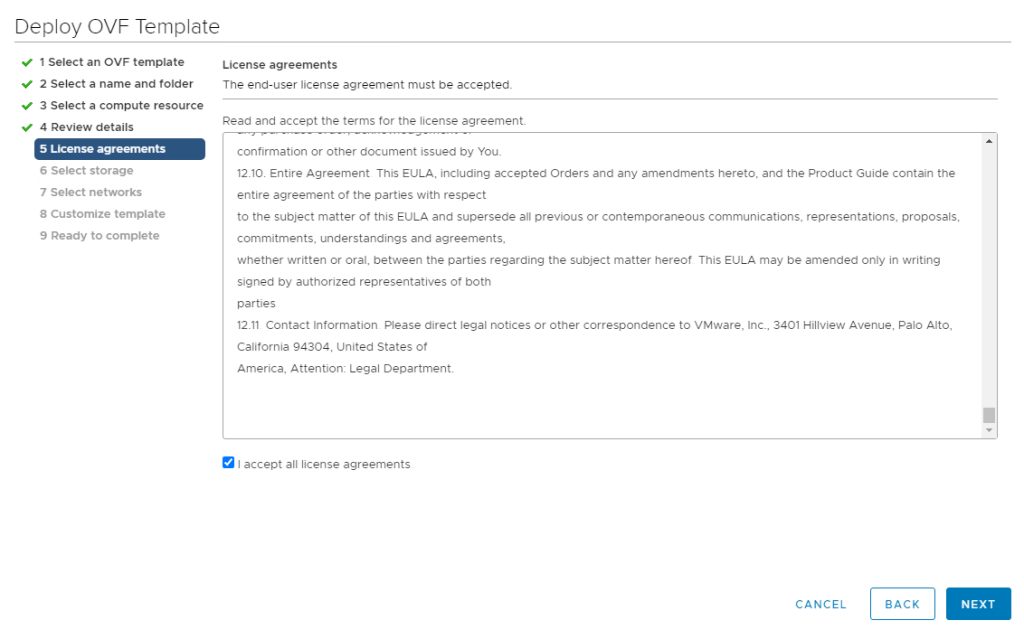

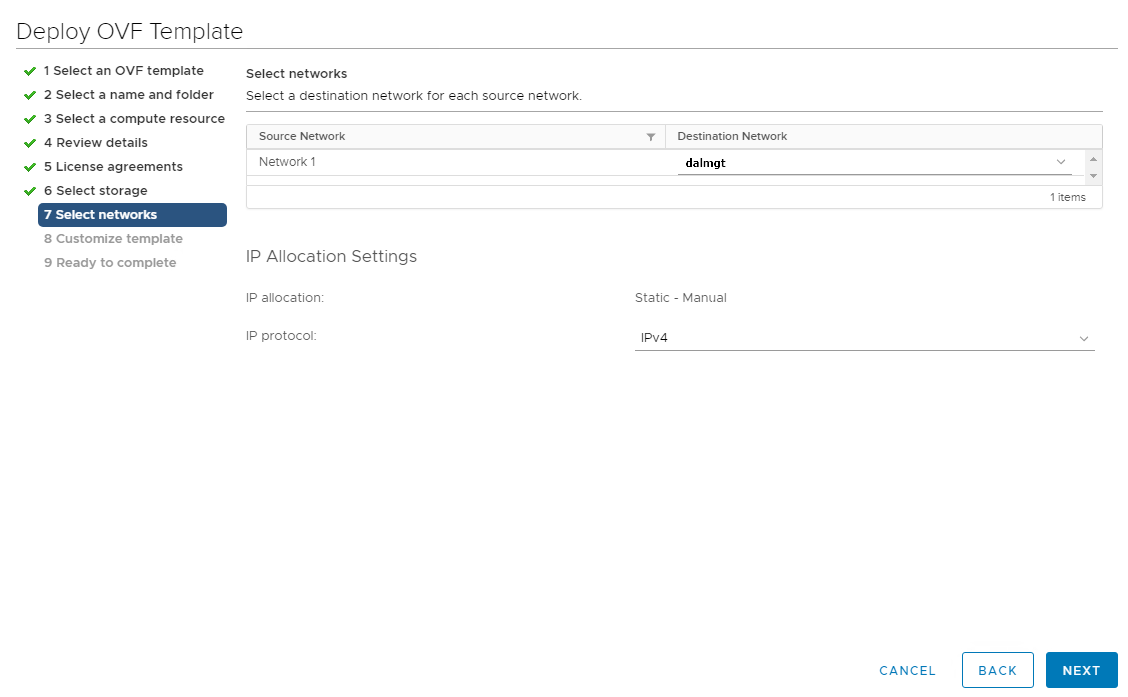

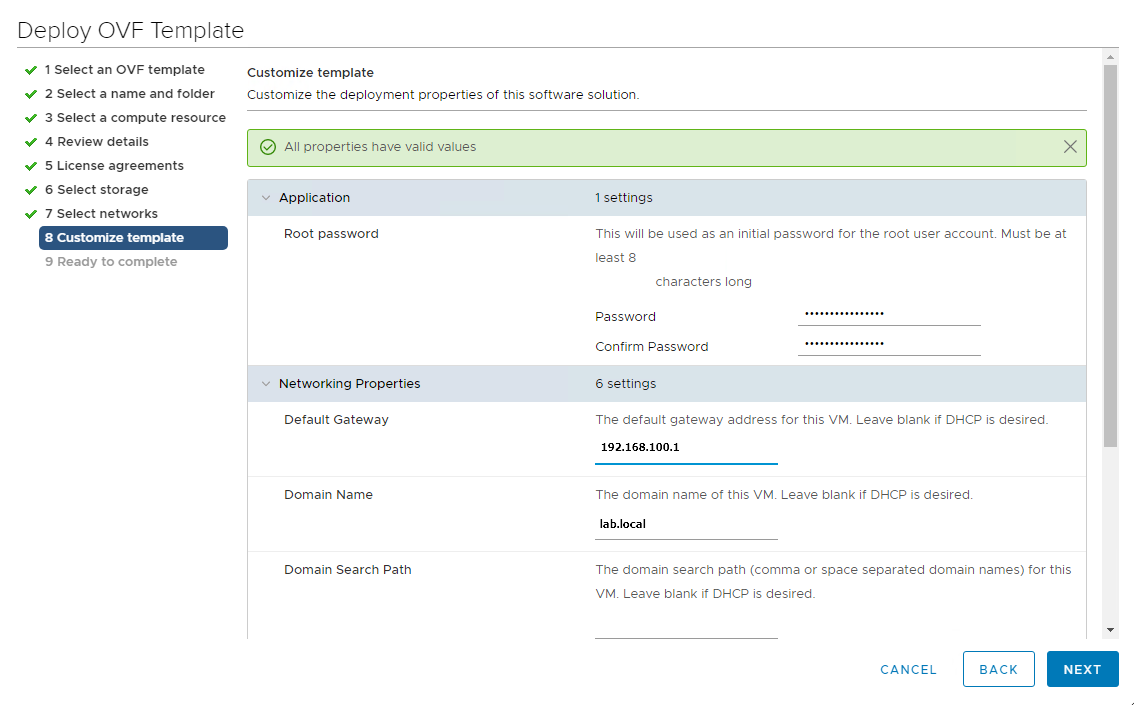

Deploy the OVA file in the vCenter Server.

This concludes the deployment of the Skyline appliance.
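I deployed the OVA through the vSphere Client wizard, but the same step could be scripted with VMware's `ovftool` CLI. The sketch below only builds the command line and does not run it. The vCenter name, datacenter, cluster, datastore, and port group are placeholders I made up, and it does not set any appliance-specific OVF properties (root password, IP settings), which you would still need to supply for your environment.

```python
from urllib.parse import quote


def build_ovftool_command(ova_path, vm_name, vcenter, user,
                          datacenter, cluster, datastore, network):
    """Build an ovftool argument list for deploying an OVA to a vCenter cluster.

    All inventory names passed in are assumptions about your environment.
    """
    # The user name is URL-encoded because '@' has a special meaning in the locator.
    target = f"vi://{quote(user, safe='')}@{vcenter}/{datacenter}/host/{cluster}"
    return [
        "ovftool",
        "--acceptAllEulas",
        f"--name={vm_name}",
        f"--datastore={datastore}",
        f"--network={network}",
        "--diskMode=thin",
        ova_path,
        target,
    ]


if __name__ == "__main__":
    command = build_ovftool_command(
        ova_path="Skyline-Appliance-3.1.0.0-19303936_OVF10.ova",
        vm_name="skyline-collector",          # hypothetical VM name
        vcenter="vcenter.example.local",      # hypothetical vCenter FQDN
        user="administrator@vsphere.local",
        datacenter="DC01",                    # hypothetical inventory names
        cluster="MGMT-Cluster",
        datastore="vsanDatastore",
        network="Mgmt-PortGroup",
    )
    print(" ".join(command))
```

Printing the command first lets you review it before running it by hand or passing the list to `subprocess.run`.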

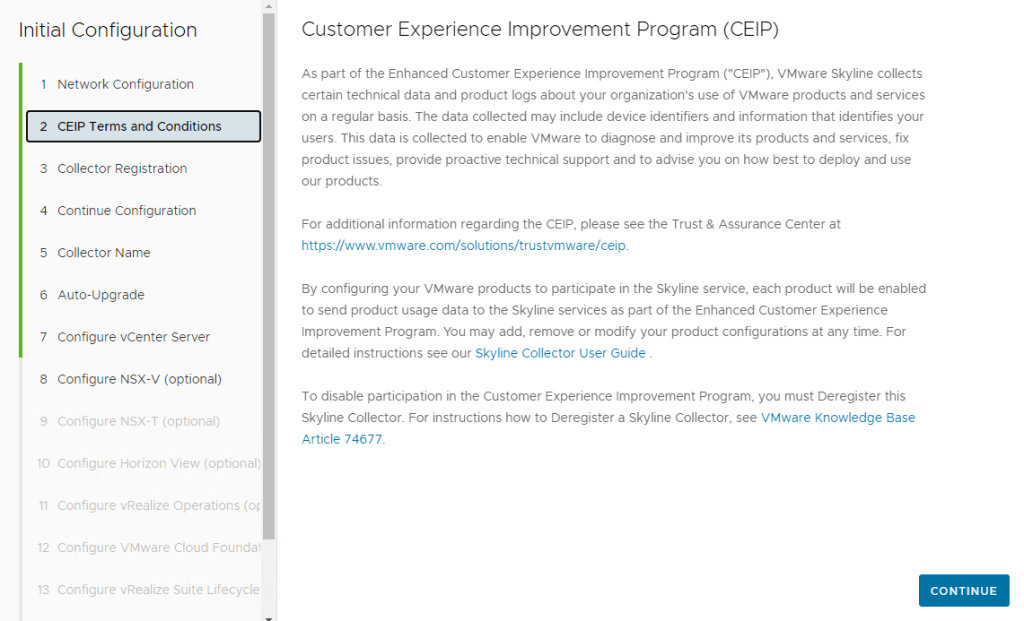

Next, go to the Skyline appliance web UI at https://skyline_hostname_fqdn
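If the page does not load, a quick check is whether the appliance answers on TCP port 443 at all. This is a small sketch using only the Python standard library; `skyline_hostname_fqdn` is the same placeholder as in the URL above, and the helper names are my own.

```python
import socket


def web_ui_url(fqdn):
    """Return the web UI URL for the appliance FQDN."""
    return f"https://{fqdn}"


def is_port_open(host, port=443, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False


# Example usage (replace the placeholder with your appliance FQDN):
# if is_port_open("skyline_hostname_fqdn"):
#     print("Open", web_ui_url("skyline_hostname_fqdn"), "in a browser")
```

A `False` result points to DNS, firewall, or appliance network settings rather than the browser.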

Log in as the default user admin, then configure the Skyline Collector as below:

You can check the “Hostname Verification” option to make sure that an HTTPS connection is enabled to the collector.

Make sure you provide the correct Collector Registration Token, which is available in the VMware Cloud Console.

Provide a friendly name for the collector. This name will be displayed in the VMware Cloud Console.

In this window you can also opt in so that the appliance auto-upgrades itself on a set day and time.

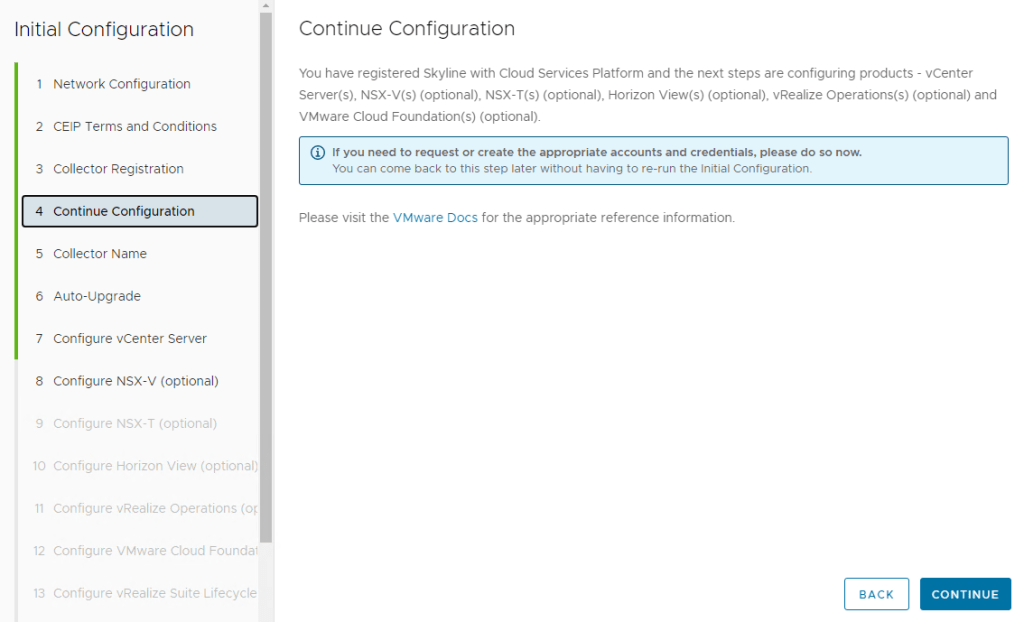

Next, you can configure the vCenter, NSX-V/NSX-T, Horizon View, vROps, VCF (SDDC Manager), and vRSLCM components with their hostnames and credentials.

This is the final step: after configuring the required components, click Finish.

With this, we complete the deployment and configuration of VMware Skyline Collector version 3.1.

At the time of this writing, the Skyline Collector version is 3.2.
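It helps to gather the hostname and service account for each component before starting this part of the wizard. The sketch below is just a pre-flight checklist in Python; the `Endpoint` class, the example hostnames, and the account names are all hypothetical and are not a Skyline API.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    """One product endpoint to register in the collector (hypothetical structure)."""
    product: str
    hostname: str
    username: str


def incomplete_endpoints(endpoints):
    """Return the product names whose hostname or username is still blank."""
    return [
        e.product
        for e in endpoints
        if not e.hostname.strip() or not e.username.strip()
    ]


if __name__ == "__main__":
    # Example values only; replace with the details from your own environment.
    planned = [
        Endpoint("vCenter", "vcenter.example.local", "svc-skyline@vsphere.local"),
        Endpoint("NSX-T", "nsxt.example.local", "svc-skyline"),
        Endpoint("SDDC Manager", "sddc.example.local", ""),
    ]
    print("Still missing details for:", incomplete_endpoints(planned))
```

Passwords are deliberately left out of the structure so they are only ever typed into the collector UI.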